Healthcare Data Security Terms for Medical Coders

Medical coders do not need to become security engineers, but they do need to understand the language of risk before risk turns into lost access, weak audit trails, failed compliance reviews, and revenue disruption. Every day, coders work inside electronic health records, follow EMR documentation workflows, rely on health information management processes, and answer to regulatory compliance rules. If they do not understand data security terms, they can code accurately and still expose the organization to serious operational and legal damage.

1. Why Data Security Vocabulary Matters in Medical Coding

Many coding teams still think data security belongs to IT, cybersecurity, or privacy officers. That assumption breaks down the moment a coder opens a chart from home, downloads a report, sends a query, exports a worklist, or handles payer-facing documentation. In practice, coders sit at the center of coding workflows, practice management systems, revenue cycle management software, clearinghouse connections, and EDI billing. The security risk is not abstract. It sits inside their normal workflow.

A coder may never configure a firewall, but that same coder can still mishandle documentation that contains PHI, store files outside approved record retention and storage rules, share files in ways that conflict with coding ethics and standards, create gaps in coding audit trails, or compromise the integrity of claims management workflows. That is why security terminology matters. It helps coders recognize danger before it looks like a formal incident.

The real pain point is that coding departments are asked to move fast, code accurately, stay productive, answer audits, support denials, and often work remotely. Speed creates shortcuts. Shortcuts create exposure. One spreadsheet copied to a personal drive can undermine reconciliation, one shared login can erase payment posting accountability, one unencrypted export can disrupt claims reconciliation, one weak remote setup can complicate remote workforce compliance, and one preventable breach can freeze access across the revenue cycle. Coders who know the language of security can spot these failure points early.

| Term | What It Means | Why It Hits Coding | Best Practice Action |
|---|---|---|---|
| PHI | Protected health information linked to an identifiable patient | Coders view, move, and reference it constantly | Limit access and sharing to job need only |
| ePHI | Electronic protected health information | Most coding activity touches ePHI, not paper records | Use approved systems only |
| CIA triad | Confidentiality, integrity, and availability | Frames the three ways coding data can fail | Check whether workflows protect all three |
| Confidentiality | Only authorized users can see data | Unauthorized viewing is still a serious event | Avoid casual sharing and open screens |
| Integrity | Data stays accurate and unaltered | Bad data leads to bad coding and payer risk | Verify source and version before using reports |
| Availability | Systems and data remain accessible | Downtime can stop coding and billing operations | Know downtime procedures and escalation paths |
| Access control | Rules that determine who can access data | Too much access expands breach surface | Use least privilege access |
| RBAC | Role-based access control | Determines whether coders see only what they need | Review access when roles change |
| Least privilege | Users get the minimum access required | Reduces unnecessary exposure to PHI | Challenge excessive permissions |
| MFA | Multi-factor authentication | Adds a strong barrier against account compromise | Never bypass or share codes |
| Encryption at rest | Protects stored data | Critical for laptops, drives, and local storage | Do not save files on unencrypted devices |
| Encryption in transit | Protects data while it moves | Relevant to file transfer, portals, and email routes | Use approved secure transmission methods |
| VPN | Secure tunnel for remote system access | Remote coders often depend on it | Do not work around it for convenience |
| Audit trail | Record of who accessed or changed data | Shared logins destroy accountability | Use unique credentials only |
| Minimum necessary | Only the minimum data needed should be used or disclosed | Coders should not over-export or over-share data | Trim reports and messages to needed fields |
| De-identification | Removing identifiers so data cannot easily trace back to a patient | Useful for training and QA review | Use when full identity is unnecessary |
| Tokenization | Replacing sensitive data with non-sensitive substitutes | Reduces exposure in some workflows and analytics | Know whether tokens can be reversed |
| Session timeout | Automatic logout after inactivity | Prevents exposed screens in shared spaces | Lock screens whenever stepping away |
| Privileged access | Higher-level permissions than standard users | Excess rights create outsized risk | Request only what your role truly needs |
| Phishing | Fraudulent messages designed to steal credentials or data | Coding inboxes often receive external attachments and links | Verify unexpected requests before clicking |
| Social engineering | Manipulating people into revealing access or data | Attackers often exploit urgency and routine | Pause before responding to pressure |
| Ransomware | Malware that locks data or systems for payment | Can halt coding, billing, and chart access entirely | Follow safe download and reporting practices |
| Endpoint security | Protection for laptops, desktops, and mobile devices | Remote coders rely heavily on endpoints | Use managed devices whenever possible |
| DLP | Data loss prevention tools that block risky sharing | Can stop unsafe copying, emailing, or uploads | Respect alerts instead of finding workarounds |
| Secure messaging | Approved protected communication channel | Queries and clarifications may contain PHI | Avoid consumer apps for patient data |
| Incident response | The process for reacting to security events | Slow reporting makes containment harder | Report suspicious activity immediately |
| Data breach | Unauthorized access, use, or disclosure of protected data | Even small events can trigger major response duties | Treat near misses seriously |
| Data retention policy | Rules for how long data must be kept | Over-retention and under-retention both create risk | Know approved retention schedules |
| Secure disposal | Proper destruction of data and media | Old reports and notes remain risky if discarded badly | Use approved disposal channels only |
| Business associate agreement | Contract defining how outside vendors handle protected data | Relevant to coding vendors and outsourced workflows | Confirm vendors are approved and governed |
| Shadow IT | Unapproved tools used for work | Convenience apps can create major exposure | Never move PHI into unauthorized tools |

2. Core Healthcare Data Security Terms Every Medical Coder Should Know

The first terms coders must master are PHI, ePHI, minimum necessary, access control, and least privilege. These are the terms that shape daily behavior. They define whether a coder should open a chart, export a list, send a file, forward an attachment, or view a record outside a direct work reason. This is not just privacy language. It directly affects EHR documentation practices, electronic health record integration, query process controls, HIM governance, and medical coding compliance expectations. A coder who misunderstands minimum necessary often shares too much, not too little.
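As a rough illustration of minimum necessary applied to an export, the sketch below keeps only the columns a task needs before a report is shared. The field names, the sample row, and the `trim_export` helper are all hypothetical; a real field list would come from your organization's policy, not from this example.

```python
# Hypothetical list of columns needed for a denial follow-up worklist.
NEEDED_FIELDS = ["encounter_id", "claim_status", "denial_code"]


def trim_export(rows, needed_fields=NEEDED_FIELDS):
    """Return new rows that contain only the fields the task requires."""
    return [{field: row[field] for field in needed_fields} for row in rows]


# Made-up sample row; no real patient data.
full_export = [
    {
        "encounter_id": "E1001",
        "patient_name": "Example Patient",
        "date_of_birth": "1980-01-01",
        "claim_status": "denied",
        "denial_code": "CO-50",
    }
]

trimmed = trim_export(full_export)
print(trimmed)
```

An encounter ID can still point back to a patient inside the source system, so trimming like this reduces exposure but does not de-identify the file.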

The second group includes confidentiality, integrity, and availability. These three concepts explain nearly every security failure in a coding environment. If confidentiality fails, unauthorized people see protected data. If integrity fails, coders may work from altered, outdated, or incomplete information. If availability fails, access to the chart, encoder, or billing platform disappears exactly when productivity demands are highest. These are not separate from coding performance. They affect encoder software use, coding automation workflows, revenue cycle management processes, claim form handling, and UB-04 billing operations. Security language becomes operational language very quickly.

The third group is about identity and accountability: RBAC, MFA, audit trail, session timeout, and privileged access. These terms matter because coders often work in teams where fast handoffs, shared work queues, remote logins, and manager overrides create temptation to bend rules. But once credentials are shared or elevated access becomes casual, audit evidence weakens. That can complicate coding audit reviews, distort coding productivity benchmarks, undermine error-rate investigations, blur responsibility inside medical billing reconciliation, and weaken controls around payment posting. Good audit trails start with individual accountability.
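To make RBAC and least privilege concrete, here is a minimal sketch of a role-to-permission lookup. The role names, action names, and the `is_allowed` helper are assumptions made up for illustration; real systems define roles inside the EHR or identity platform, not in a script like this.

```python
# Hypothetical role definitions: each role lists only the actions it needs.
ROLE_PERMISSIONS = {
    "coder": {"view_chart", "assign_codes", "send_query"},
    "coding_auditor": {"view_chart", "view_audit_log"},
    "coding_manager": {"view_chart", "assign_codes", "send_query", "export_report"},
}


def is_allowed(role, action):
    """Return True only if the role explicitly includes the action."""
    # Unknown roles get an empty permission set, so access is denied by default.
    return action in ROLE_PERMISSIONS.get(role, set())


print(is_allowed("coder", "view_chart"))
print(is_allowed("coder", "export_report"))
```

The deny-by-default lookup is the design point: a role that is missing or misspelled gets no access, rather than falling back to broad access.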

The fourth group involves transport and storage: encryption at rest, encryption in transit, VPN, secure messaging, data retention policy, and secure disposal. This is where many coders create risk without meaning to. A coder may export a report to “work faster,” email a screenshot because “it is quicker,” keep old spreadsheets “just in case,” or store reference files outside approved systems because the official process feels slow. That behavior collides with record retention rules, clearinghouse data flow, EDI transmission standards, practice management system controls, and revenue cycle software governance. Convenience is one of the biggest breach drivers in coding environments.
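The "just in case" spreadsheet problem can be sketched as a simple retention check. The 30-day window, the file names, and the `files_past_retention` helper below are hypothetical; actual retention periods are set by organizational policy and applicable law, and this sketch only lists candidates for review rather than deleting anything.

```python
from datetime import date, timedelta

# Hypothetical retention window for local working files, in days.
RETENTION_DAYS = 30


def files_past_retention(files, today, retention_days=RETENTION_DAYS):
    """Return names of files created before the retention cutoff date."""
    cutoff = today - timedelta(days=retention_days)
    return [f["name"] for f in files if f["created"] < cutoff]


# Made-up inventory of working files.
working_files = [
    {"name": "denials_worklist.xlsx", "created": date(2024, 1, 5)},
    {"name": "query_log.xlsx", "created": date(2024, 3, 1)},
]

overdue = files_past_retention(working_files, today=date(2024, 3, 10))
print(overdue)
```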

The fifth group covers threat language: phishing, social engineering, ransomware, endpoint security, DLP, incident response, data breach, and shadow IT. These terms matter because coders are high-value targets. They work with real patient data, payer correspondence, denial letters, attachments, spreadsheets, and workflow emails that make malicious messages look normal. A fake clearinghouse message can look routine. A bogus attachment can resemble payer documentation. A request for “quick access” can sound harmless when teams are buried in backlog. That is why coders need to stay current on HIPAA compliance changes, coding compliance trends, billing compliance violations, denials management best practices, and revenue leakage prevention. Threat awareness protects both data and revenue.

3. Where Data Security Failures Hurt Coding Accuracy, Compliance, and Reimbursement

The first damage point is bad data integrity. If reports are pulled from the wrong source, if payer files are altered in transit, if work queues sync incorrectly, or if local spreadsheets become the “real” version instead of the approved system, coders may assign codes from flawed information. The problem may look like a coding error, but the root cause is a security and governance failure. This is why teams must connect security vocabulary to coding workflow controls, encoder software definitions, clinical documentation improvement terms, problem-list documentation logic, and SOAP note coding workflows. Accurate coding depends on trustworthy source data.
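One common way to check that a file was not altered between source and coder is to compare a cryptographic hash against a checksum published by the sender. The sketch below uses Python's standard `hashlib` with SHA-256; the helper names and sample bytes are hypothetical, and it assumes the sender shares the checksum through a separate trusted channel.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the data as a hex string."""
    return hashlib.sha256(data).hexdigest()


def matches_published_checksum(data: bytes, published_hex: str) -> bool:
    """Compare the data's digest with the checksum the sender published."""
    return sha256_hex(data) == published_hex.strip().lower()


# Made-up report content standing in for an exported file.
original_report = b"encounter_id,claim_status\nE1001,denied\n"
published = sha256_hex(original_report)

# A single changed value produces a different digest.
altered_report = b"encounter_id,claim_status\nE1001,paid\n"

print(matches_published_checksum(original_report, published))
print(matches_published_checksum(altered_report, published))
```

A matching hash only shows the bytes are unchanged since the checksum was made. It does not show that the report was pulled from the right source in the first place.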

The second damage point is weak accountability. When multiple people use one login, when access is not role-based, when session controls are lax, or when exported files move outside monitored systems, leaders lose the ability to answer critical questions. Who accessed the chart? Who downloaded the report? Who modified the worklist? Who viewed the denial packet? That uncertainty becomes painful during coding audits, claims reconciliation reviews, payment posting investigations, reimbursement disputes, and revenue cycle KPI monitoring. Poor security design makes root-cause analysis dramatically harder.
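A shared login often shows up in access logs as one account active on two workstations at once. The sketch below flags that pattern as a heuristic for human review. The log fields (`user_id`, `device_id`, `start`, `end`), the sample sessions, and the function name are assumptions for illustration; overlapping sessions can also have legitimate explanations.

```python
from datetime import datetime
from itertools import combinations


def find_possible_shared_logins(sessions):
    """Return user IDs with overlapping sessions on different devices."""
    by_user = {}
    for session in sessions:
        by_user.setdefault(session["user_id"], []).append(session)

    flagged = set()
    for user_id, user_sessions in by_user.items():
        for a, b in combinations(user_sessions, 2):
            # Two time ranges overlap when each starts before the other ends.
            overlaps = a["start"] < b["end"] and b["start"] < a["end"]
            if overlaps and a["device_id"] != b["device_id"]:
                flagged.add(user_id)
    return sorted(flagged)


def at(hour, minute=0):
    """Build a timestamp on a made-up sample day."""
    return datetime(2024, 3, 10, hour, minute)


# Made-up session log.
sample_sessions = [
    {"user_id": "coder_a", "device_id": "WS-01", "start": at(9), "end": at(10)},
    {"user_id": "coder_a", "device_id": "WS-07", "start": at(9, 30), "end": at(9, 45)},
    {"user_id": "coder_b", "device_id": "WS-02", "start": at(9), "end": at(10)},
    {"user_id": "coder_b", "device_id": "WS-02", "start": at(11), "end": at(12)},
]

print(find_possible_shared_logins(sample_sessions))
```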

The third damage point is availability failure. Coders do not always think of downtime as a security issue, but ransomware, compromised endpoints, or blocked access tools can halt chart review, stop coding queues, delay claims, interrupt denial responses, and create backlogs that take weeks to unwind. Once access fails, teams often improvise. They create local trackers, use personal devices, forward files to “temporary” locations, and build unsafe workarounds. That is how one incident becomes many. Downtime should therefore be connected to claims management operations, RCM efficiency metrics, impact on hospital revenue, medical billing reconciliation, and collections and bad debt pressure. Security failures do not stay inside IT.

4. How Coders Should Handle Security Risk Across EHRs, Queries, Remote Work, and Vendors

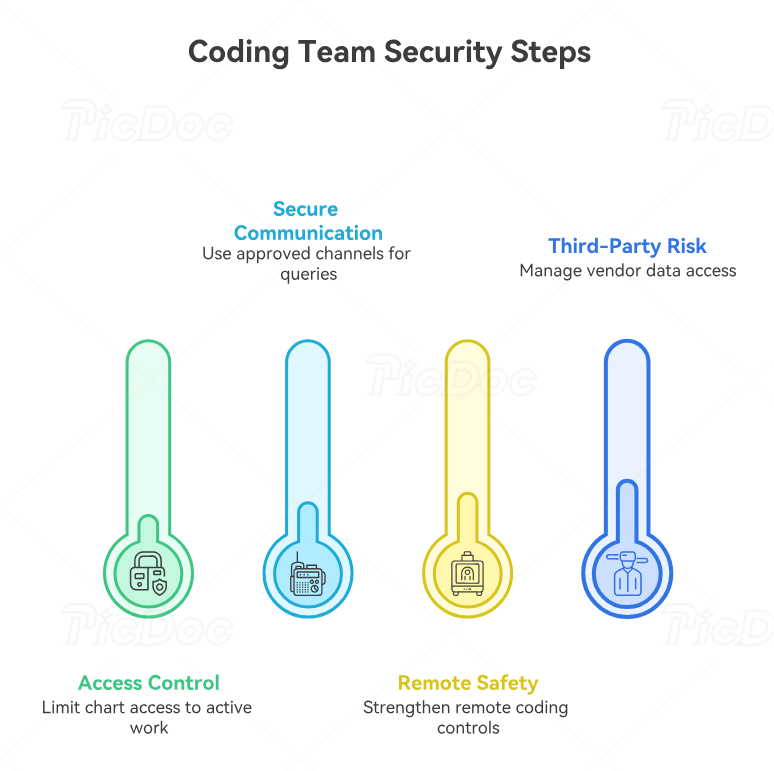

The safest coding teams build security into routine behavior, not just annual training. Start with chart access. Coders should open only records tied to active work responsibility, avoid browsing beyond necessary context, and challenge any workflow that gives broad access “just in case.” That discipline protects EHR documentation handling, aligns with EMR documentation controls, strengthens HIM governance, supports medical necessity review, and keeps teams within coding ethics expectations. Access discipline is the first security control most coders actually own.

Next comes communication. Coding queries, physician clarifications, denial discussions, payer follow-up, and case escalations often involve exactly the kind of detail attackers want. If those conversations drift into personal email, consumer messaging apps, copied screenshots, or unapproved shared documents, the organization loses control over where ePHI travels. Teams should standardize secure channels for coding query workflows, claims management communication, denials follow-up, CARC analysis, and RARC review. Security breaks fastest in the name of speed.

Remote coding deserves special attention because it multiplies small mistakes. Home networks, shoulder surfing, family-shared devices, browser autofill, local downloads, and unsecured printouts all create avoidable risk. A coder may be perfectly accurate clinically yet still create a compliance nightmare by saving work files locally or taking screenshots for convenience. That is why remote teams should align security behavior with remote workforce trends in coding, the future of remote medical billing and coding jobs, remote workforce management planning, automation in billing roles, and the future of medical coding with AI. Remote productivity is only useful when remote controls are strong.

Vendor and third-party risk is the last area many coders underestimate. Coding vendors, offshore teams, clearinghouses, billing partners, and platform providers may all touch protected data or coding outputs. If users do not know which tools are approved, whether outside access is governed, or how data should move between parties, “helpful” shortcuts can create major exposure. This is where coders should understand the connection between security and clearinghouse terminology, EDI billing standards, RCM software terms, practice management system definitions, and healthcare billing acronym literacy. Approved workflow is a security control, not just an operations preference.

5. Building a Security-Strong Coding Workflow That Survives Audits, Growth, and Operational Pressure

A security-strong coding operation starts by refusing to separate security from quality. Many organizations train coders on accuracy and train security teams on privacy, but they do not train coders on how security failures distort coding output, audit readiness, and reimbursement. The better model links secure access, approved communication, clean audit trails, and controlled exports directly to coding quality improvement, compliance audit trends, billing compliance risk, revenue leakage prevention, and accurate reimbursement performance. Secure behavior is not separate from operational excellence. It is part of it.

The next step is to eliminate “gray-zone” workflows. Every coding team has them. Maybe it is the shared spreadsheet nobody talks about. Maybe it is the manager who forwards patient-specific screenshots. Maybe it is the local archive someone keeps because system search is slow. Maybe it is the offshore handoff that depends on email attachments. Gray-zone processes feel harmless until an audit, breach, or dispute forces leadership to map data flow honestly. Teams should review record retention practices, claims reconciliation pathways, medical billing reconciliation steps, payment posting controls, and RCM KPI definitions with security in mind. The dangerous process is often the “temporary” one that became permanent.

Finally, leadership should measure what actually predicts trouble. Do not stop at completion rates for training modules. Measure whether coders still use unauthorized storage, whether shared access persists, whether audit logs show weak accountability, whether remote devices are controlled, whether queries stay inside approved channels, and whether incidents are reported fast. Then connect those findings to coding productivity benchmarks, error-rate reports, RCM efficiency metrics, coding career development standards, and continuing education expectations. Mature coding departments do not just code securely by policy. They prove it by workflow design and measurable behavior.
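Reporting speed is one of the easier behaviors to measure. As a sketch, the example below computes the median hours between when a coder noticed a problem and when it was reported. The record fields, timestamps, and function name are hypothetical, and a real metric would draw on the organization's incident tracking system.

```python
from datetime import datetime
from statistics import median


def hours_to_report(incidents):
    """Return hours elapsed between noticing and reporting each incident."""
    return [
        (item["reported_at"] - item["noticed_at"]).total_seconds() / 3600
        for item in incidents
    ]


# Made-up incident records.
sample_incidents = [
    {"noticed_at": datetime(2024, 3, 4, 9, 0), "reported_at": datetime(2024, 3, 4, 10, 0)},
    {"noticed_at": datetime(2024, 3, 5, 9, 0), "reported_at": datetime(2024, 3, 5, 14, 0)},
    {"noticed_at": datetime(2024, 3, 6, 8, 0), "reported_at": datetime(2024, 3, 6, 11, 0)},
]

delays = hours_to_report(sample_incidents)
median_delay = median(delays)
print(median_delay)
```

The median is used here instead of the mean so that one very late report does not hide otherwise fast reporting, though leadership may want to track the slowest cases separately.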

6. Frequently Asked Questions About Healthcare Data Security Terms for Medical Coders

- Why do medical coders need to understand data security terms if IT handles security?

Because coders are daily users of protected data, and many security failures start with normal user behavior rather than technical configuration. Coders open records, send queries, review attachments, work claims, export reports, and often operate across EHR documentation systems, EMR workflows, HIM processes, claims management systems, and billing software environments. IT can build controls, but coders still decide whether daily behavior strengthens or weakens those controls.

- Which data security concept matters most in a coder's daily work?

For most coders, the most important daily concept is minimum necessary. It governs how much data you open, how much you export, how much you disclose, and how much you include in communication. If coders consistently apply minimum necessary, they usually improve behavior around query handling, audit readiness, record retention, HIPAA-related compliance change impacts, and coding ethics. It is a simple idea with massive operational value.

- Is sending a spreadsheet that contains patient data always a violation?

Not automatically, but it is a major risk area and should never be treated casually. The real questions are whether the spreadsheet is necessary, whether the channel is approved and secure, whether the recipient truly needs all included fields, whether the file will be stored safely, and whether the workflow aligns with EDI controls, clearinghouse processes, claims reconciliation practices, billing reconciliation procedures, and payment posting governance. In many cases, the safer answer is to avoid sending it at all.

- What is the difference between confidentiality and integrity?

Confidentiality is about preventing unauthorized access to protected information. Integrity is about making sure the information remains accurate, complete, and unaltered. A coder can preserve confidentiality and still fail integrity by coding from outdated exports, altered files, or poorly governed reports. Both concepts affect coding workflow accuracy, CDI alignment, problem-list support, medical necessity review, and accurate reimbursement. Good security protects both what is seen and what is trusted.

- Why are shared logins such a serious problem?

Shared logins destroy accountability. Once multiple people use one account, audit trails become unreliable, investigations get muddy, and corrective action becomes harder. That weakens coding audit defensibility, complicates productivity measurement, weakens error-rate analysis, undermines revenue cycle controls, and increases compliance exposure. Convenience is never worth that trade.

- What should a coder do after a suspected security mistake?

Report it immediately. Delay is one of the biggest reasons small mistakes become major incidents. Do not try to “fix it quietly,” delete evidence, or hope nothing happens. Fast escalation helps contain exposure, preserve evidence, and protect operations tied to claims management, denials management, RCM efficiency, collections pressure, and hospital revenue performance. Fast reporting is a core security skill.

- What is the biggest mistake organizations make with security in coding departments?

The biggest mistake is treating security as separate from coding operations instead of embedding it into workflow design, training, metrics, and daily supervision. When security sits in a separate silo, coders still create gray-zone workarounds, managers still tolerate risky shortcuts, and leadership still gets surprised during audits or incidents. The stronger approach is to connect security expectations to coding career development, continuing education, credentialing professionalism, compliance trend monitoring, and future-proof workforce planning. Secure coding is a discipline, not a side topic.